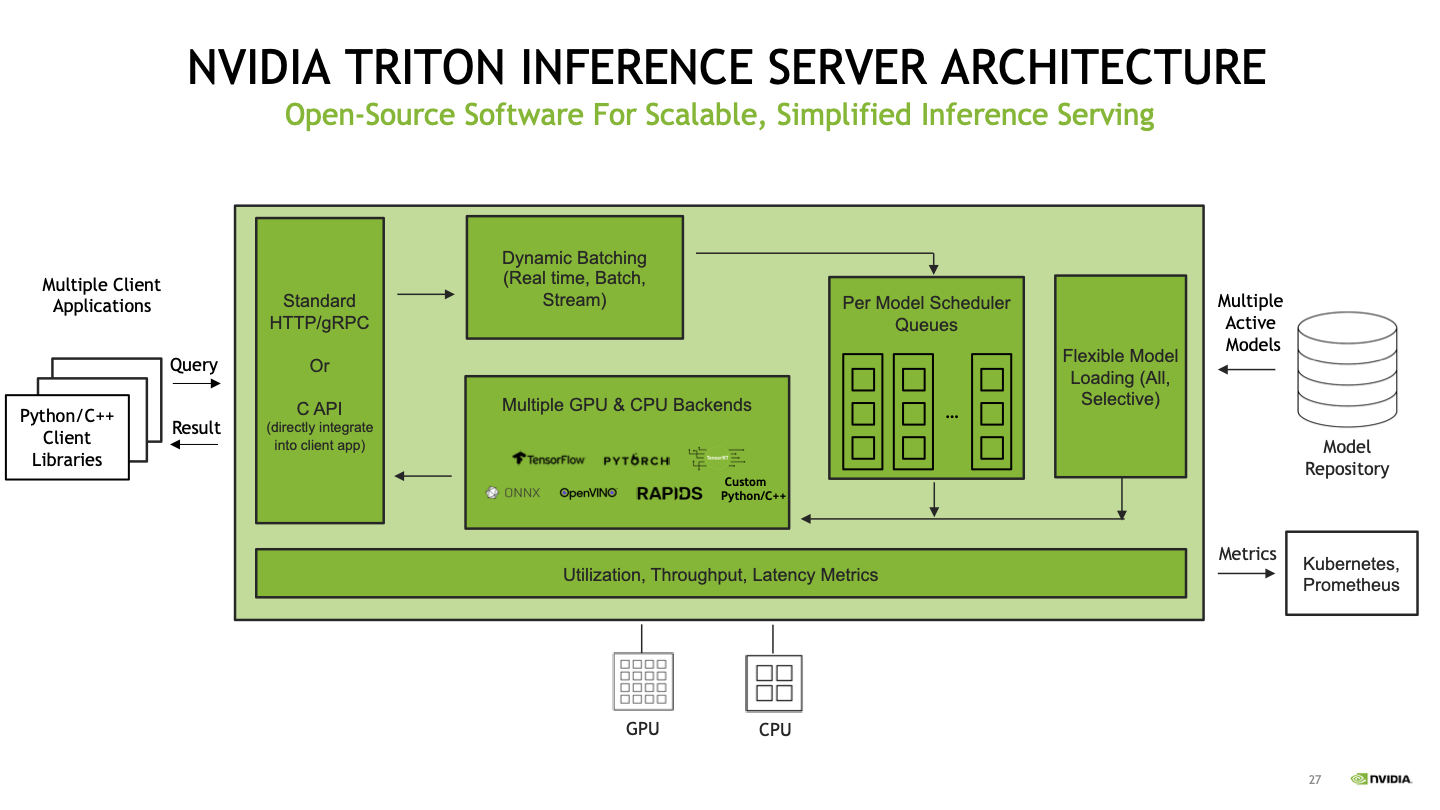

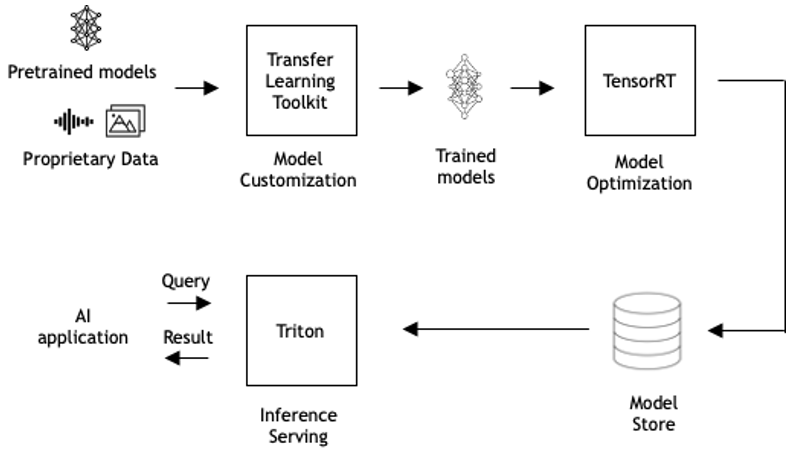

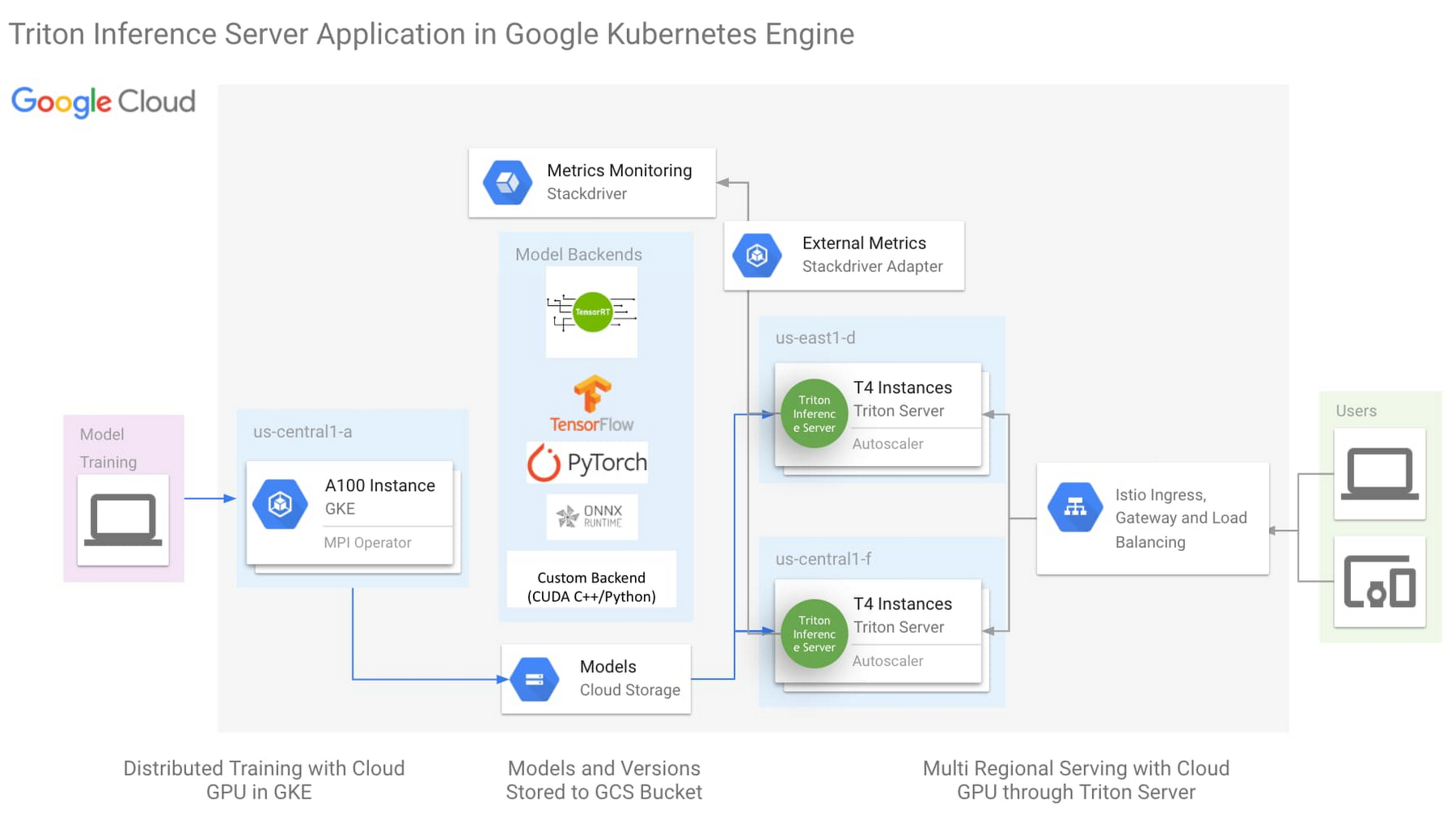

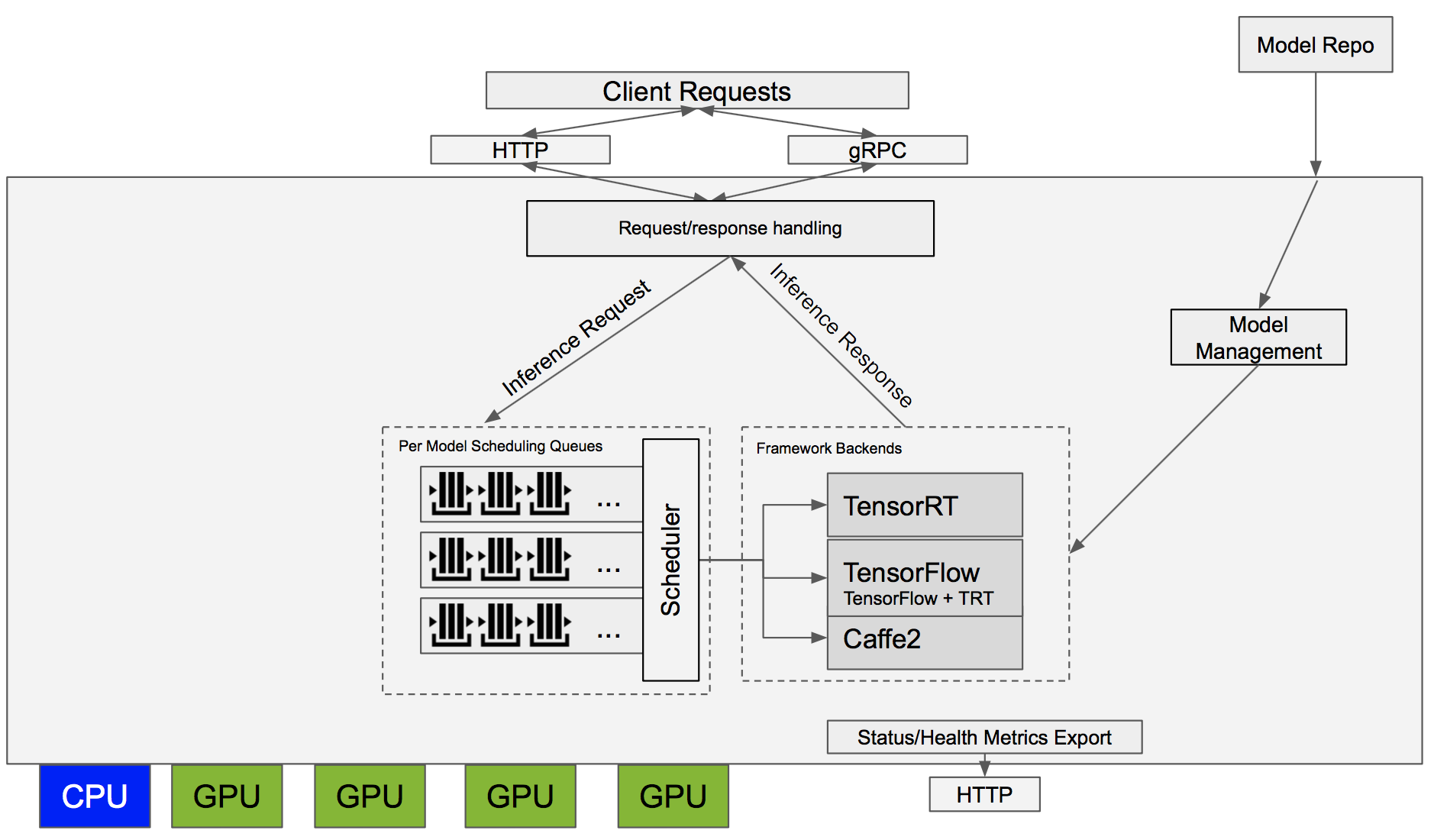

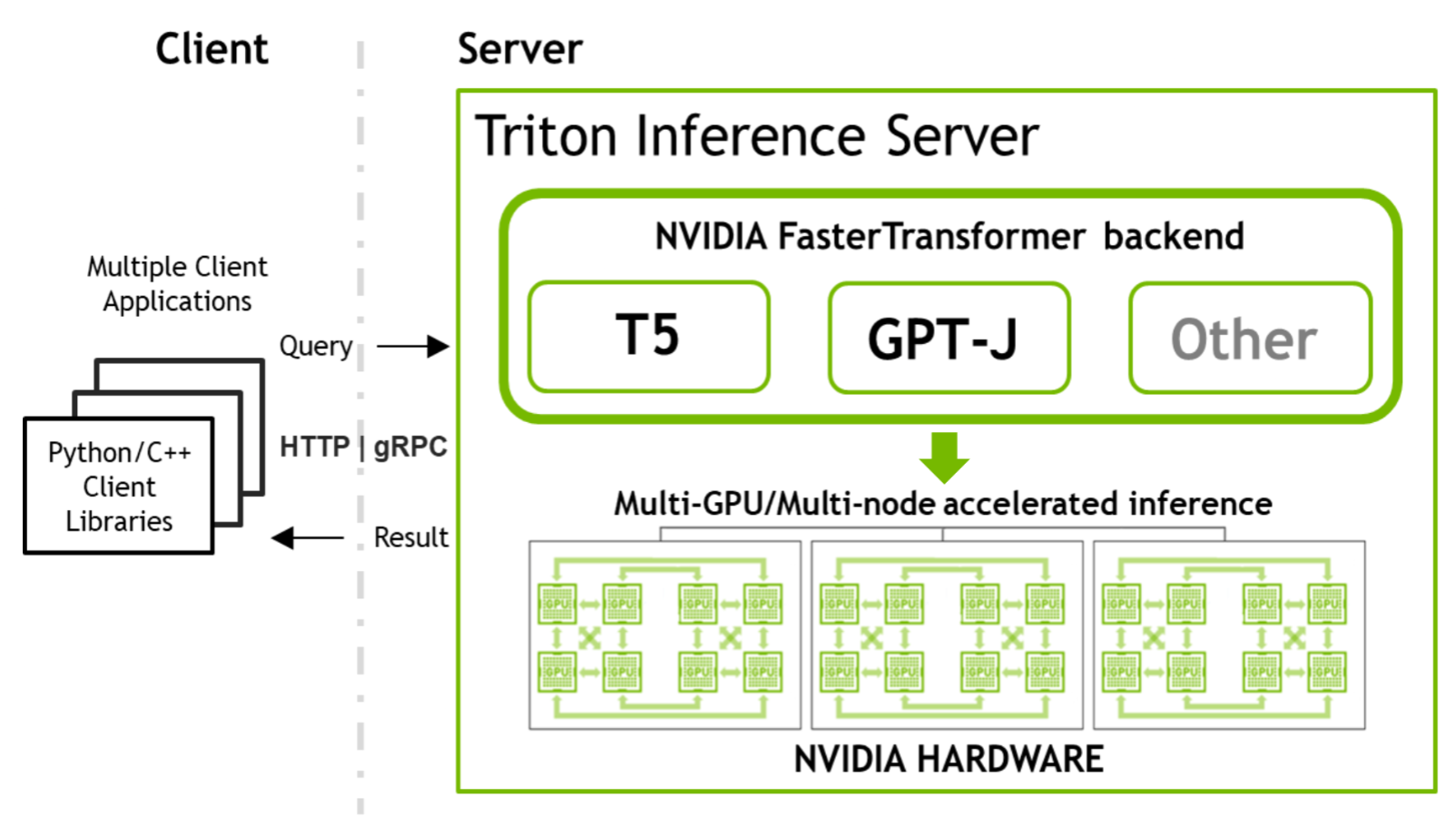

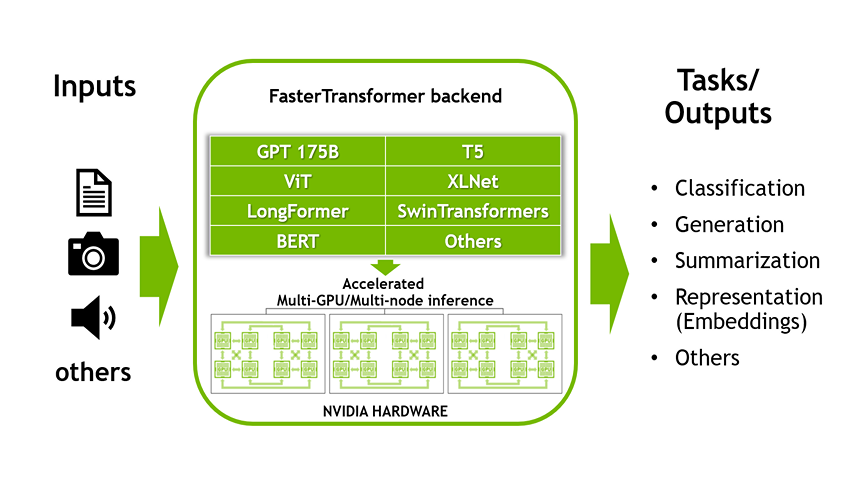

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server | NVIDIA Technical Blog

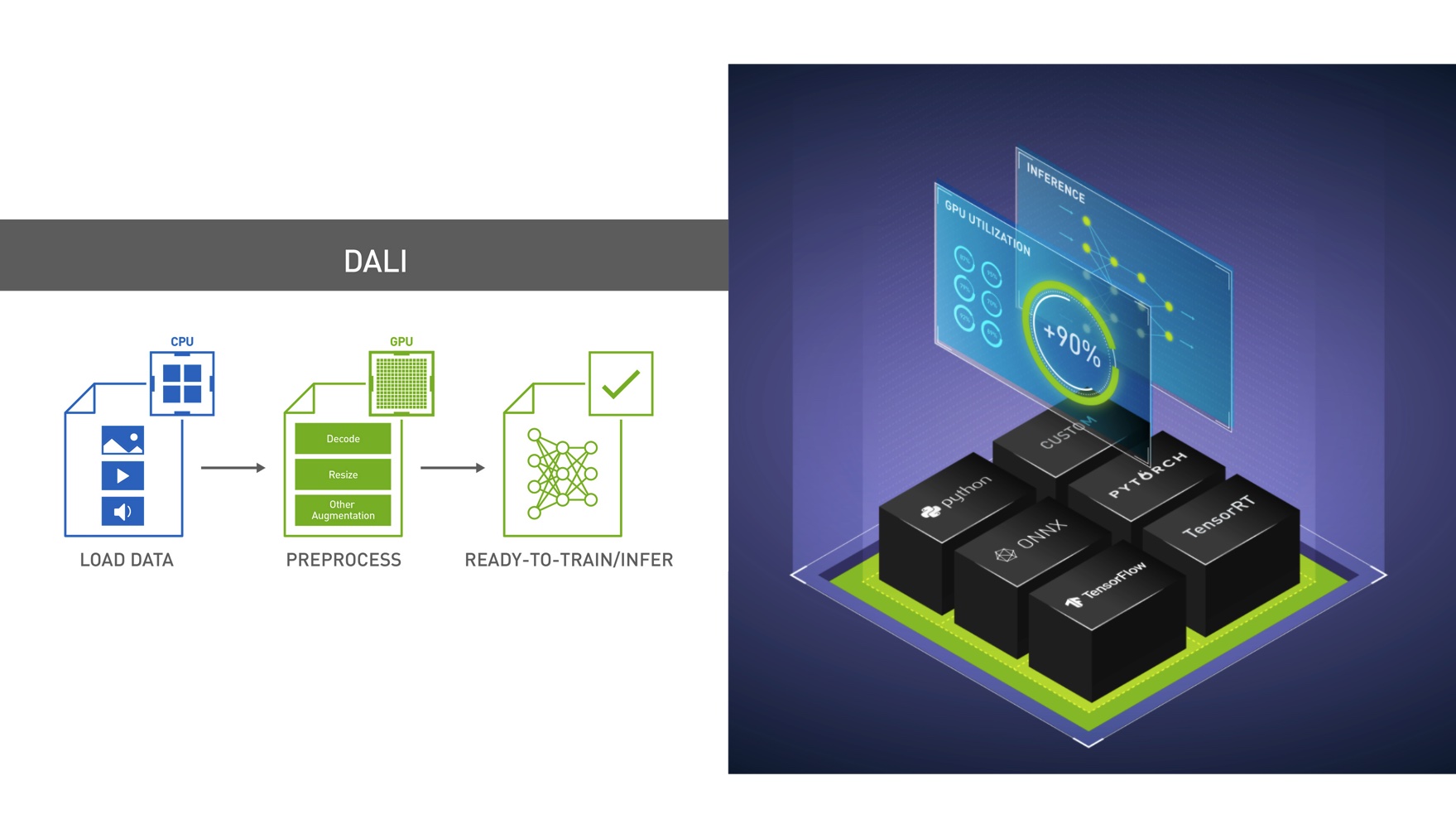

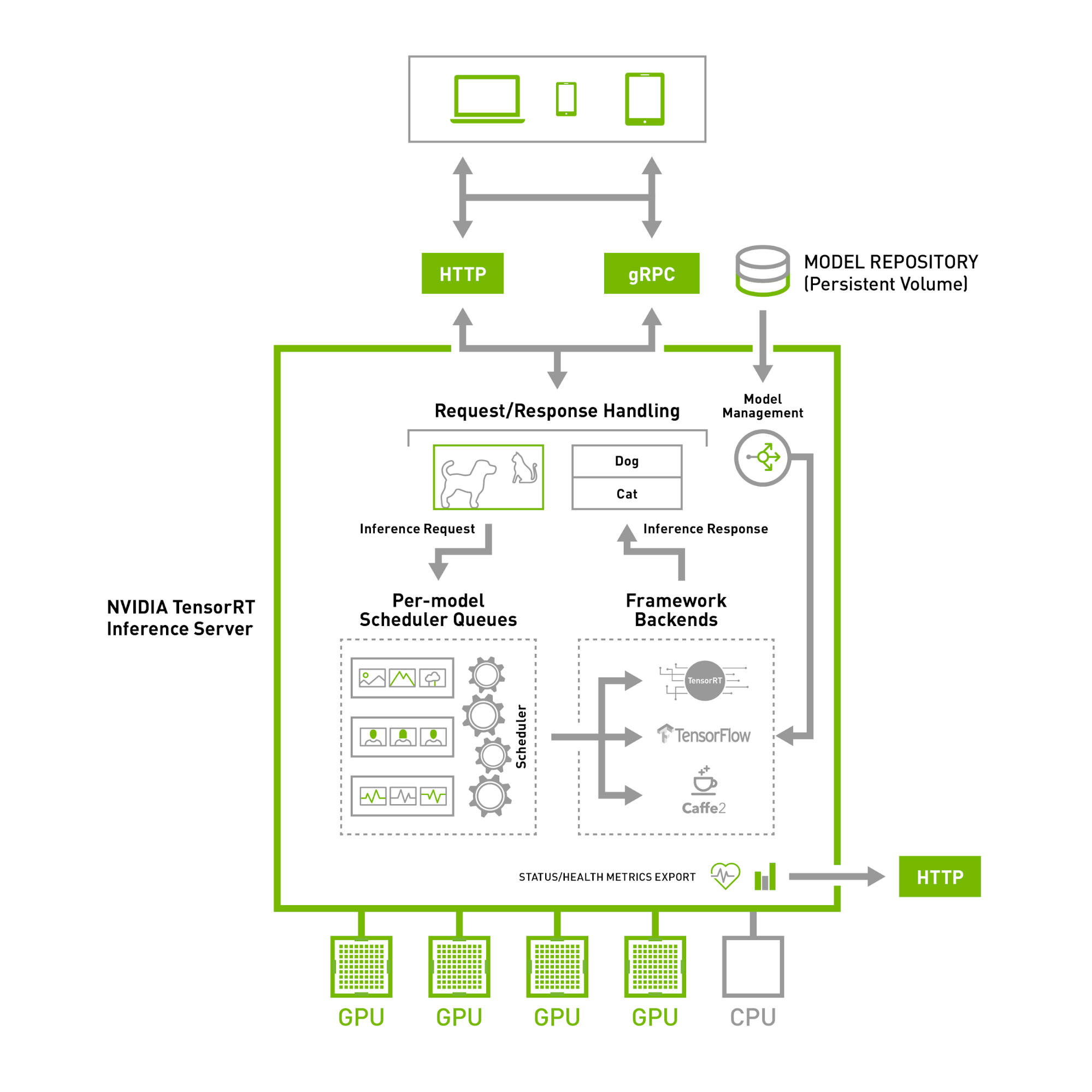

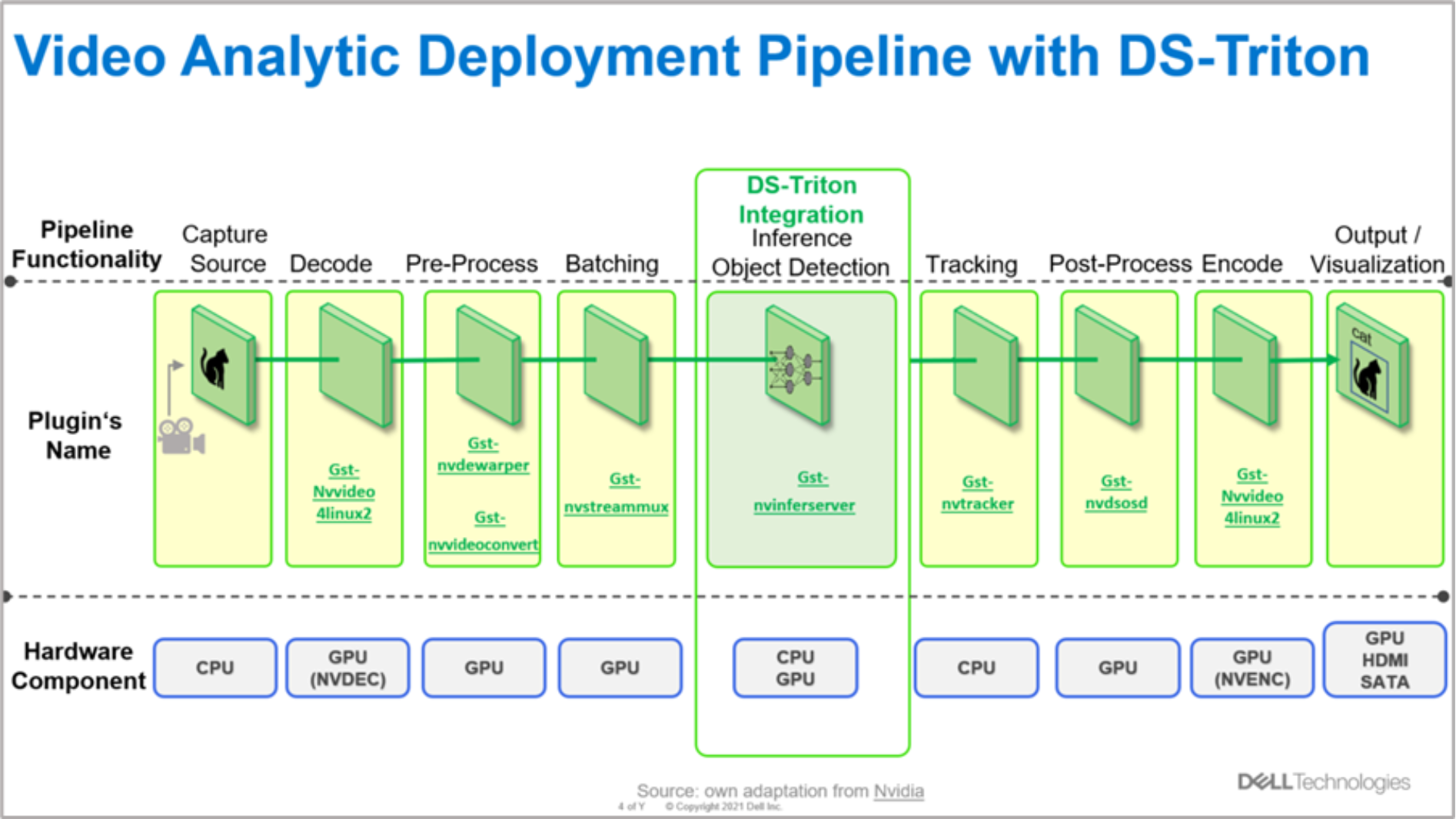

NVIDIA DeepStream and Triton integration | Developing and Deploying Vision AI with Dell and NVIDIA Metropolis | Dell Technologies Info Hub

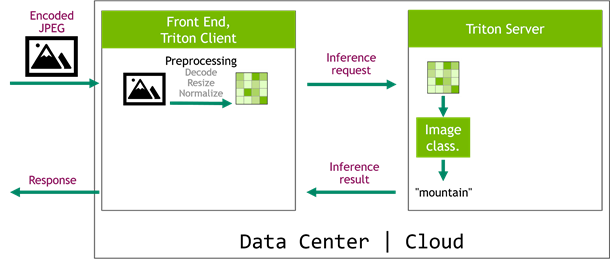

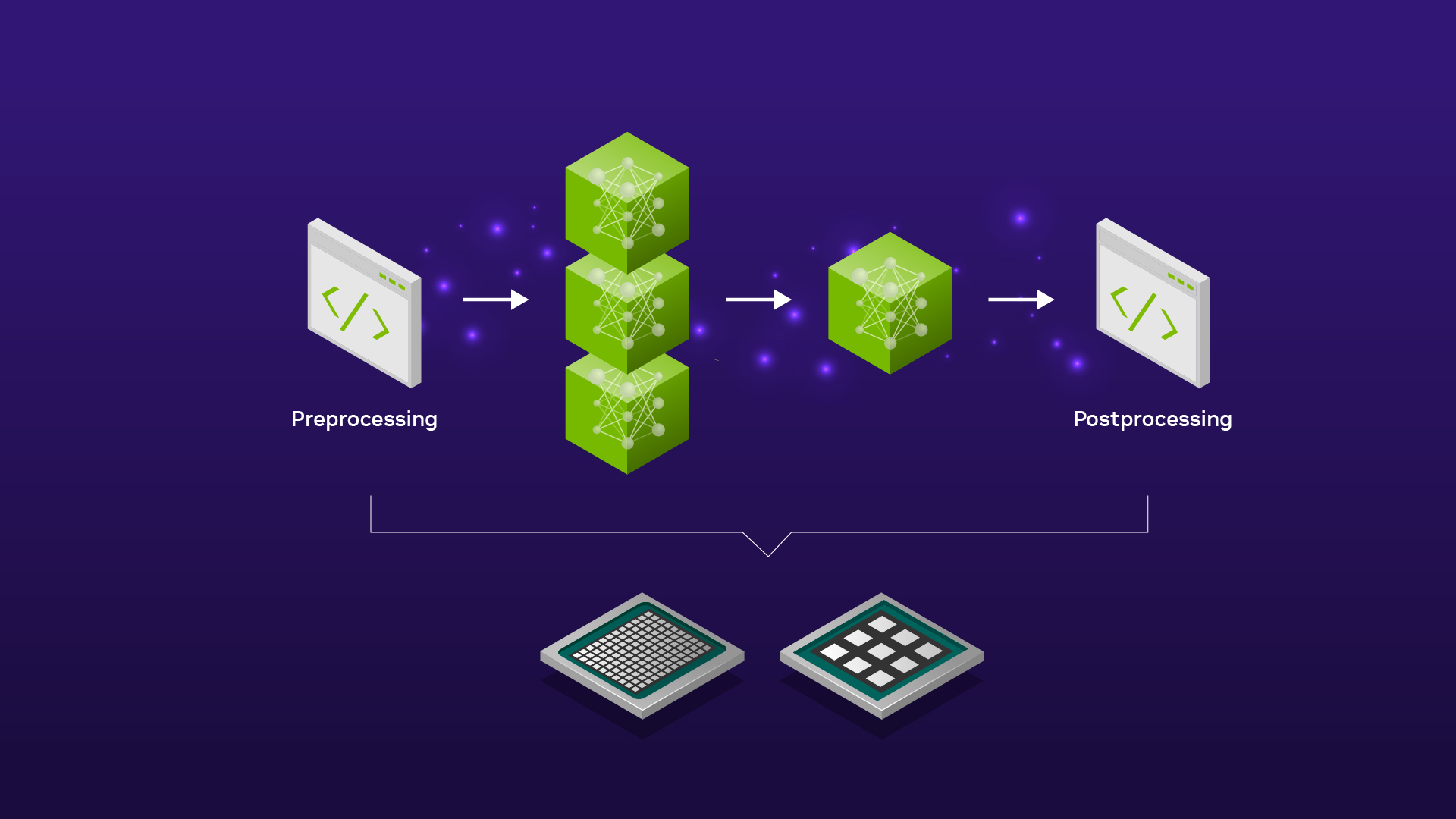

Serving ML Model Pipelines on NVIDIA Triton Inference Server with Ensemble Models | NVIDIA Technical Blog

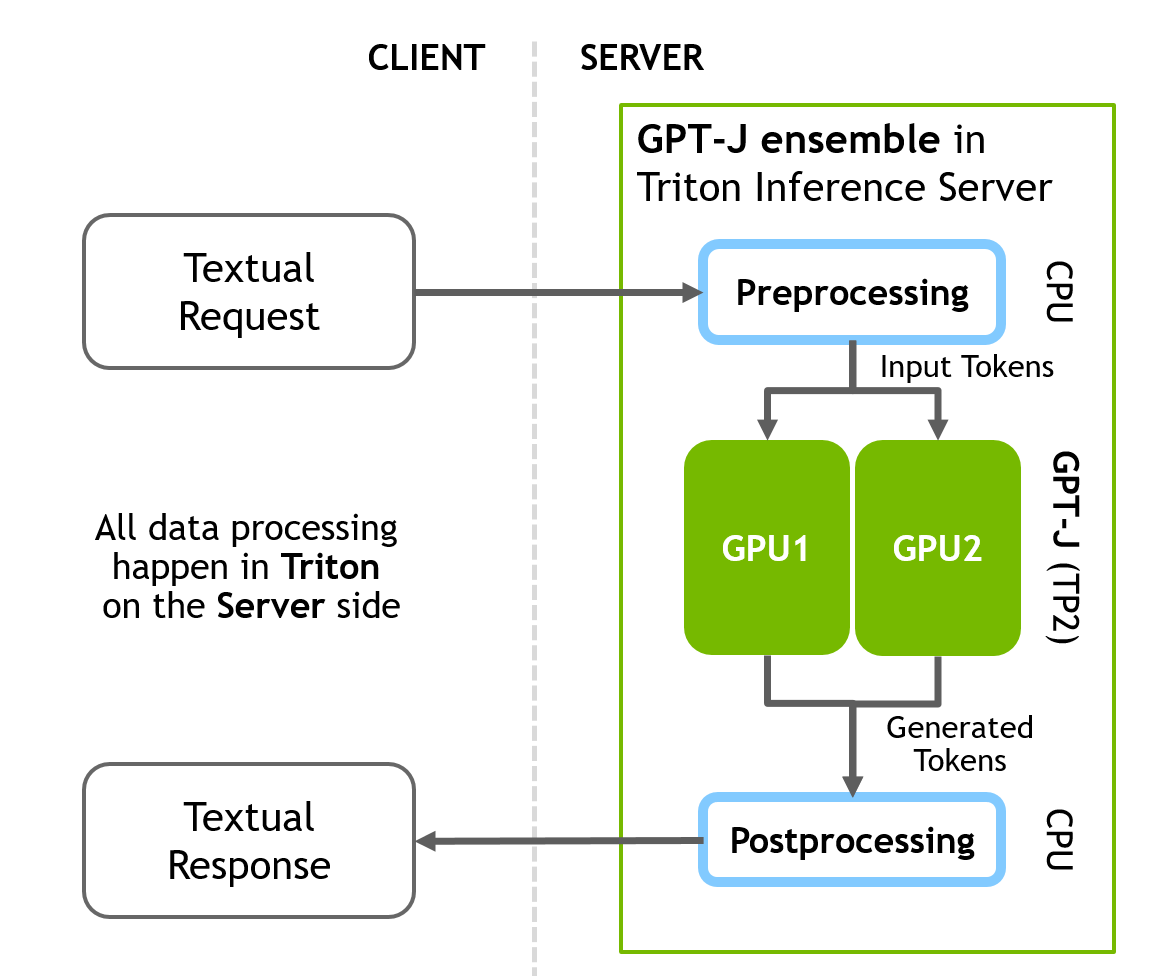

Serving Inference for LLMs: A Case Study with NVIDIA Triton Inference Server and Eleuther AI — CoreWeave